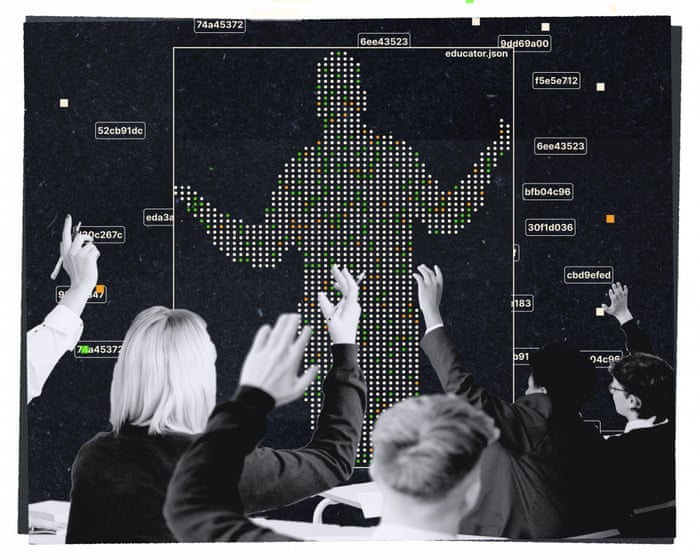

Two years ago, at 39, I started training to become a school teacher. My goal was to teach English: to help young people become better readers, writers, and thinkers, and to connect more deeply with literature. After 15 years as a freelance writer and novelist, I felt sure I had something to give. But the further I got into my training, the less certain I became. One question, in particular, haunted me throughout my training: what to do about artificial intelligence?

The immediate dilemma is this: all students now have access to free online chatbots that can produce fluent, fairly complex writing on demand. What does that mean for teaching English? This question sits on top of a wobbly stack of timeless teaching puzzles: What is the real purpose of school? How should we teach? How do we know if we’ve succeeded? As a newcomer figuring all this out for the first time, adding AI into the mix felt like drinking coffee in the middle of a panic attack.

I began desperately searching for perspectives on AI and the English classroom wherever I could find them—in pedagogy podcasts, Substacks, and YouTube channels. My algorithmic feeds noticed this interest and started catering to it, offering me an endless stream of content—including endless ads from tech companies—all promising to help me think through these urgent questions and do right by my students.

I quickly learned this was a world of heated, often bitter, debate. On one side (to simplify a bit) were the AI rejectionists: teachers and education commentators who saw AI as nothing less than an existential attack by greedy tech companies on the core activities of the classroom. They argued that students need to learn how to push through difficulty: to read complex texts and develop complex arguments. They need to learn that these processes are full of friction and uncertainty, and they need to embrace that fact rather than run from it. Having access to a one-click writing machine makes it too easy to run away.

Rejectionists shared horror stories of students turning in AI-generated papers but being unable to answer the simplest questions about them, or citing sources that chatbots had completely made up. They pointed to studies suggesting that using chatbots dulls students’ reasoning skills or even hinders brain development. They raised ethical concerns about AI’s environmental impact, its reliance on copyrighted material, and the oligarchic tendencies of big tech. For most rejectionists, the solution was to create a classroom AI couldn’t touch. They talked about shifting to in-class essays, possibly handwritten. They debated bringing back oral exams and quizzes.

On the other side were the AI cheerleaders. I don’t mean their extreme counterparts—the mostly male tech executives who ranted about how AI would soon end schooling as we know it or claimed reading books was already a waste of time. I mean teachers and commentators who argued, often passionately, that despite its risks, AI also held great potential. Instead of being cheating machines, chatbots could act as powerful teaching assistants, engaging with every student at once, providing personalized feedback exactly when needed, and gently guiding each learner along their own path. From this perspective, the rejectionists’ instinct to avoid AI showed a lack of understanding of its possibilities and did a disservice to students, who would leave school without the tech skills they’d need for university and their careers.

As I waded through the arguments between rejectionists and cheerleaders, trying to make sense of their competing statistics and studies, my anxiety grew. I’ve noticed something about teachers, myself included. Because we take our responsibilities so seriously, we often fear doing the “wrong” thing: using ineffective or discredited methods, failing to give students what they need. We believe, often from experience, that good teachers can change lives; we also know that really bad teachers can leave a mark, especially in English, where they are often … I understand the fear of being seen as out-of-touch, hiding in the classroom because we don’t quite fit in the ever-changing adult world. I know this fear well. I was determined not to fall for tech hype, but I also didn’t want to stubbornly dismiss a potentially useful new tool.

All I needed was a practical decision. I didn’t need to determine whether AI was an evil scam or the future of everything, nor did I need to decide its broader implications for education. I simply had to figure out what AI meant for the high school English classes I was about to teach. Nervously, I downloaded more podcasts, flooded my inbox with Substacks, and watched more YouTube videos, hoping that absorbing more information would increase my chances of getting it right—or at least ease my fear of getting it all wrong.

Last spring, I spent 15 hours a week observing a veteran English teacher, whom I’ll call Emily, at a large school in a Chicago suburb—the kind of place families move to specifically “for the schools.” Emily taught both 14-year-olds just starting high school and 18-year-olds nearly finished. What I saw in her classroom immediately made me sympathetic to the skeptics.

I witnessed all the disruptive effects you read about: fully AI-generated papers, AI-hallucinated quotes, and tense conversations between students and teachers about what could be proven. I sat with Emily as she graded papers, joining her in stressing over ambiguous cases, trying to distinguish student nonsense from AI nonsense, and genuine student improvement from AI-polished work.

I became a teacher largely because I wanted to spend time with young people’s writing, giving it the close attention it deserves. Watching over Emily’s shoulder, I saw how AI’s presence—or even its potential presence—interfered with this process. I became familiar with the unique despair of looking at a paper and trying to divine its origins rather than figuring out how best to respond to it. I also saw how teachers are constantly bombarded with offers of AI assistance, not just through email and social media ads, but even more so through AI tools integrated into their schools’ email and grading software.

Emily’s students all had school-issued laptops, and her computer had a program that allowed her to monitor every student’s screen simultaneously, displayed in a grid reminiscent of CCTV monitors. Using this program was always unsettling—Big Brother, c’est moi—and yet always transfixing. Some students didn’t use AI at all, at least in class. Others turned to it at every opportunity, feeding in questions almost reflexively. At least one student habitually entered every new subject into ChatGPT to generate notes for reference if called on. Often, I saw students funneled toward AI even when they weren’t necessarily looking for it. I grew accustomed to watching a student Google a topic like “key themes in Romeo and Juliet,” read the AI-generated answer now appearing at the top of most search results, click “Dive deeper in AI mode,” and suddenly find themselves chatting with Google’s chatbot, Gemini, which was always ready to advertise its own capabilities: “Should I elaborate on one or more of these themes? Should I draft a first paragraph for an essay on the subject?”

Emily told me that most of the reading she assigns now has to happen in class, and she reads much of it aloud, especially at the beginning.

I was shocked. Yes, I’d read countless articles about the “contemporary reading crisis,” but it was still dismaying to see firsthand how much teen reading had declined. When I decided to become a teacher, I had romantic visions of guiding students, like a literary captain, through complex texts and their connections to life. In those visions, the actual reading mostly happened outside the classroom. So what did it mean for my teaching ambitions that so many of my students seemed unable to read on their own? And that when it came time to write, so many instinctively turned to AI? I wondered, gloomily, if I’d signed up for a profession that unstoppable historical forces were about to wipe out.

But then I watched Emily read to the class, and my spirits lifted. For a writer, describing supposed classroom magic is a bit like describing sex—the attempt often produces sentences that are both cringeworthy and unconvincing. Still, I feel obliged to tell you: reading time was sometimes magic.

Shortly after I arrived, the younger classes started All Quiet on the Western Front. At first, students were skeptical: “We’re really reading another whole book?” Then, with Emily’s guidance, they found their footing: World War I, young German soldiers, trench warfare, loss of innocence, the psychological toll of living with death, the disconnect from home. Laptops were put away, and phones were in pouches by the door, as per school policy. Everyone knew they could raise a hand anytime to ask for clarification or share an observation. Sometimes, Emily paused to highlight moments she suspected were confusing—things students might be too shy to admit or misreadings they didn’t even realize they were making—or sentences ripe with multiple interpretations. Day by day, in almost imperceptible shifts, the book transformed from an intimidating monolith into a familiar companion.

Eventually, the students stopped complaining and got into it. They wanted to know how it would end, gasped at dramatic turns, and wondered aloud—with feeling—why characters acted as they did. Why had Remarque written it that way? Then, one day, it happened: a room full of American 14-year-olds in 2025 was fully inside a story about German 19-year-olds in the 1910s, viewing the book through the lens of their lives and their lives through the lens of the book. I could feel it in the air—the room quietly crackling with energy flowing between students, teacher, and words put to paper almost a century before.

The AI antics I’d witnessed had been depressing; the AI-free teaching I saw was inspiring. Before my observation period ended, Emily let me lead some readings myself, and a couple of times I experienced a full-body high. I felt ready to shout from the rooftops: I’m an AI rejectionist—and proud of it!

Over the summer, though, my doubts crept back. As stirring as reading time in Emily’s class had been, I knew it hadn’t actually answered my questions about AI in the classroom. I knew that in the fall, I’d return as a student teacher, taking on most of the lesson planning and grading. I had more decisions to make, especially about writing. Given my concerns about chatbots, what would I have students write? And when, and how?

Because I’d consumed—and was still consuming—so much content about AI and teaching, I could stage an internal debate between radically different perspectives.

Me: “Reading together as a class, without any AI or devices, felt great. I know that for sure. I want to use that as my starting point.”

Also me: “But what did the students really learn? How do you know?”

Me: “Well, I got to hear how their thoughts seemed to evolve in real time.”

Then I asked myself, “But did every single student participate?”

I admitted, “Well, no. But they all did a lot of writing afterward—by hand, in the classroom—and I was able to read that.”

I pushed further: “Having read what they wrote, do you really think every student learned as much as they could have? Did they all learn everything you hoped?”

I had to concede: “Well… I guess not. Not all of them. Not everything.”

Then I wondered: “What if, after your AI-free reading and discussion, when students sat down to write, they each had access to an AI chatbot that could give them feedback tailored exactly to their comprehension level and learning style? What if you, the teacher, could train that chatbot to align with your goals for the assignment and the class?”

I replied, “But that’s already my job—to give personalized feedback.”

I countered: “How much time do you really have for that? Can you intervene every single time it would help? What about when students are writing at home, or the night before an assignment is due and they’re completely off track? Why wouldn’t you want them to know that?”

That left me sweating.

In the name of due diligence, I started experimenting with AI chatbots, including ones designed for classrooms or with a “student mode.” First, I tested their ability to do the worst thing: take one of my assignments, add simple instructions like “Make it sound like a 15-year-old wrote it,” “Include some realistic typos and grammatical errors,” and “Don’t make it too polished,” and generate something indistinguishable from student writing. Back in 2023, it was a comforting belief that teachers could always spot machine writing. I can now report that, for better or worse, that’s no longer true.

Next, I tested these chatbots on less obviously problematic uses, like commenting on drafts or answering questions about assignments. Performance varied, but some were very good. In fact, I was so impressed that I started occasionally feeding them drafts of my own magazine pieces, sometimes getting instant, genuinely useful feedback. Sitting at my computer, I felt an imaginary squad of cheerleaders gathering behind me, ready to declare victory.

I kept returning to my memories of reading time in Emily’s classroom, trying to figure out what felt so special. Part of it, I decided, was how the activity structured everyone’s attention. With all laptops and phones put away, everyone was fully engaged the whole time. It was truly astonishing to see.

Just kidding—it was school. Some portion of the class’s attention was always on the things teenagers think about: next period’s test, weekend plans (or the lack thereof), whether their crush liked them back, their parents’ argument the night before, or ICE officers in the neighborhood. But thanks to the structure of reading time, the opportunity to focus was always within reach. A student could find their way back to it without being sidetracked by the temptations of a bright, scrollable screen—an always-on portal to more distractions.

It was good, I was sure, to have some enforced separation between learning and the lure of technology. My instinct was to enforce that same separation in their writing process. Is it possible to design a chatbot that gives reliably useful writing feedback? Maybe. Can chatbot feedback be regulated so it doesn’t become a crutch? Probably. Can a chatbot be instructed not to offer one-click rewrites? Yes. But every high school student (busy, overwhelmed, nervous about writing, eager to finish their schoolwork) knows, as does anyone who has taught writing in recent years, that on the public internet these time-saving tools are just a click away, whether during the day, at night, or on weekends. I couldn’t erase chatbots from my students’ world any more than I could take away their phones. All I could do was decide how much to steer them toward these tools and how much to nudge them toward other experiences.

One part of me thought: This fall, I’ll try to keep things as AI-free as possible. What students need most are sustained experiences of reading and writing—with all the friction and uncertainty those involve—free from tech distractions.

But another part countered: Learning to handle tech distractions is part of life. And surely they’ll need AI in the future to enhance their thinking and stay competitive.

Maybe, I wondered. But can you enhance your thinking if you haven’t yet learned how to think? Don’t I keep reading interviews with Silicon Valley executives who strictly limit their own kids’ screen time?

Then again: Is it possible you’re projecting your own worries about how much time you waste online, and imagining you’d be a better, more successful writer if someone would just turn it all off for you?

That’s possible, yes.

Freud called teaching one of the “impossible professions.” You can never declare total success or even know the full effects of what you’re doing. (Worse, he wrote: “One can be sure beforehand of achieving unsatisfying results.”) All fall, I reminded myself of this daily, trying to feel better about how deeply unsure I was of almost everything I did.

When I devoted class time to reading, it felt wonderful. But then I worried that because it felt so good, I was overdoing it—the teaching equivalent of trying to be healthy by eating only spinach. When I had students write their essays entirely in class, I felt virtuous for banning big tech’s brain-numbing shortcuts. (The image of Ian McKellen as Gandalf, standing firm before the towering Balrog and shouting “YOU SHALL NOT PASS!” became a kind of companion.)

Then, at night, reviewing the day, I’d worry that by confining writing to class time, I wasn’t exposing students to the very aspects of writing I value most: the intertwined frustrations and joys of revising, the movement from draft to draft, the experience of living with a piece over time, letting it color and be colored by the rest of your life.

When I assigned more ambitious projects and gave students the extra time they required—including, necessarily, unsupervised time—I felt virtuous again. But then my mind would fill with visions of my students at home, pasting my instructions into ChatGPT, Gemini, Claude, Copilot, Grammarly.

I spent a lot of time trying to design unconventional writing assignments—so well crafted, so genuinely interesting, so unlike the rigidly formulaic essays of the past—that students wouldn’t want to skip them.

Imagine you work in Hollywood: the book we’ve just read is being made into a movie, and you have to choose the soundtrack. Explain which songs go with which scenes and why, showing you understand each scene’s tone and role in the larger story.

Write your own version of Binyavanga Wainaina’s satirical essay “How to Write About Africa,” replacing “Africa” with something important to you that you feel is often misrepresented, and in doing so, demonstrate your understanding of Wainaina’s rhetorical choices.

I loved reading these assignments. I loved seeing how students understood what we were reading. I loved hearing their music. I loved learning about their relationships to gender, their cultural backgrounds, their neighborhoods, and jotting down my own responses. But this love didn’t stop me from worrying. And who knows, maybe chatbots could have helped. I’m sure in some cases they did. For every assignment, I caught a few students using them to cheat. When I asked about it, they usually admitted it right away, blaming time pressure and not understanding what I’d asked. I urged them: if you don’t understand, just ask me! But I couldn’t help wondering: what if I had trained a chatbot to answer their questions in a way I approved of? Would fewer have resorted to cheating? (Did I even know how many actually had?) Could their writing have improved faster? Or would more of them, already tempted by the shortcut, have happily taken it? I wanted to trust them, but I felt I had to set limits. The decisions felt impossible, and it was little comfort that an Austrian psychoanalyst with a taste for cocaine had said something similar back in 1937.

Besides reading, there was one other classroom activity that felt relatively safe from all these doubts: when we talked directly about AI. I tried to explain my own thoughts on the subject—including my uncertainty—and also asked for theirs. I gave my older students questionnaires about AI, asking what tools they used, how long they’d been using them, and how they felt about it. A few told me they’d never used AI and never wanted to—it creeped them out. Some worried about what it meant for jobs. Others described using chatbots to create flashcards and review questions, get fashion advice, edit social media posts, replace Google searches, find cooking tips, get workout plans, seek health advice, and even get health advice for their pets.

Almost everyone who filled out the questionnaire expressed some fear—or at least awareness—that AI could weaken their ability to think for themselves. I realize some may have sensed my skeptical stance and told me what they thought I wanted to hear. I also knew some were probably leaving out things they didn’t want to share, like using chatbots to ease loneliness. Still, their concerns about their own thinking felt genuine.

It wasn’t always clear, though, that students really understood what original thinking meant—or when they were bypassing it. More than one expressed a firm commitment to developing their own ideas, then a few lines later described “responsible” AI uses that, from my perspective, undermined exactly what they hoped to build. I’ll have AI give me a thesis statement, but then I’ll write the paper. I’ll have AI suggest a few thesis statements, pick one, and have AI outline it. I’ll have AI write a first draft, then change things to make it original.

Only one student admitted using AI to complete an entire writing assignment he didn’t want to do. He explained he meant no personal offense—his life was busy, and “some teachers” gave repetitive assignments he felt weren’t worth his time. That same student’s father approached me at a parents’ night to say that while he understood my AI policies, he was also worried. In his own work, he saw how much employers valued AI skills in hiring and promotions. Shouldn’t his son’s education be encouraging that fluency?

I got a strong sense that even among students who used AI the most, their understanding of how it worked was very limited. One day, on a whim, I offered a hefty chunk of extra credit to anyone who could explain, in plain language and without looking at a screen, how chatbots generate text. No one could. I also shared an email I’d received from the US Authors Guild about how to find out if I’m eligible for compensation from a class-action lawsuit filed by book writers against Anthropic, the AI company behind Claude, a chatbot some writers had even called their favorite. I wanted to know on what grounds Anthropic might owe writers like me money. There was no answer.

So I decided to bring it up in class. It felt a little awkward. I tried to explain in simple terms where chatbot text comes from, but I quickly realized my explanation wasn’t as clear as I’d hoped. Still, it felt worthwhile. I could sense my students—and honestly, myself—shifting into a higher gear of attention as we dug into questions about the world and our place in it.

Looking ahead, I expect I’ll look for more opportunities to bring AI into classroom discussions, even as I remain very cautious about introducing AI tools themselves. I want students to get better at thinking not just about literature, but about all the language they encounter—in ads, political speeches, op-eds, and social media. If language models are going to shape how they engage with the world, I want them to be able to question how those models work. I want them to understand the business models of AI companies, how those models influence chatbot behavior, and the role played by low-wage workers in producing chatbot outputs. I want students to learn about—and reckon with—the experiences of people whose interactions with chatbots have ended in self-harm, psychosis, or suicide. I want them to know that several AI executives have openly predicted that AI growth could eventually cover much of the planet with data centers, and I want to hear what they think about that.

On my last day of student teaching, I stayed late grading a stack of work from my younger students. We’d spent several weeks reading short stories about the complicated relationships people have with teachers, mentors, and role models. Instead of essays, I’d asked them to write their own short stories—pulling characters from across the unit and placing them in original scenarios that reflected the themes we’d explored.

I let them work on these stories outside of class and submit them digitally, but I also had them write during class time and meet with me to explain their choices. As far as I could tell, only one or two had clearly handed the assignment off to a chatbot (which, if you’re wondering, did a decently passable job).

Overall, I was delighted by the inventiveness and quality of my students’ stories, and by the depth of understanding they showed for other authors’ work. To my surprise, many of them drew on a story that had been widely dismissed in class as “too weird”: Mark Twain’s The Mysterious Stranger. In the version we read—Twain rewrote it at least three times—a group of boys falls under the influence of an angel named Satan (not that Satan, he assures them; that’s his uncle). This Satan knows all kinds of fascinating magic, which at first delights the boys. But in the end, it’s a horror story. Beneath his charming surface, Satan views humanity with indifference, scorn, and hostility. The more time the boys spend with him, the more they risk absorbing that same attitude.

Several students wrote their Satans acting in ways that unmistakably mirrored the behavior of today’s chatbots. Satan offered to do characters’ homework, polish their work, and free up their time for more enjoyable activities. They did this, I swear, without any prompting from me. Despite my own skeptical leanings, I’d never thought to view Twain’s Satan that way.

The hours I spent reading those stories were a joy, largely untouched by the AI anxieties that had occupied so much of my mind that semester. The biggest threat to that joy was the constant bombardment of requests from the AI tools built into my word processor, my email, and my assignment management system. Did I want the machine to take notes on my students’ stories? Grade them for me? Sort them into categories based on patterns it found?

I didn’t. I wanted to read what my students had written myself. All semester, I’d been telling them that writing is a gift humanity created—a way to understand ourselves and each other across distance and time. How could I turn around and hand the job of responding to their work over to an algorithm? I printed the remaining stories, closed my laptop, and stepped away.

Did I catch every case of AI-assisted cheating? Certainly not. I’m sure some teachers—both skeptics and enthusiasts—would call me naive. But I knew my students; that’s the heart of teaching, isn’t it? I’d watched their drafts take shape in class and had them explain their stories—quirky, funny, moving stories—to me in person. That had to count for something.

I knew I might be fooling myself. Still, I felt a quiet sense of calm. I’d done what I believed was right for that semester. In the future, my approach will undoubtedly change in ways I can’t yet foresee. That’s part of the job, too. I picked up my pen, took the next story from the stack, and started to read.

Frequently Asked Questions

Beginner Definition Questions

1. What does “Teacher vs Chatbot” even mean?

It’s not about one replacing the other. It’s about exploring the unique strengths of human teachers and AI tools, and how they can work together to create a better learning experience.

2. Is AI like ChatGPT going to replace teachers?

No. AI can’t replace the human connection, empathy, mentorship, and ability to inspire that a teacher provides. It’s a powerful tool, not a substitute for a person.

3. What can a chatbot do that a teacher can’t?

A chatbot can provide instant, 24/7 answers to factual questions, generate endless practice examples on demand, and offer personalized tutoring on specific skills without getting tired. It’s like having a limitless, patient practice partner.

4. What can a teacher do that a chatbot can’t?

A teacher understands the “why” behind a student’s confusion, builds trust and a supportive classroom community, offers emotional support, designs creative and holistic projects, and adapts lessons based on subtle cues a machine would miss.

Benefits Integration Questions

5. What are the biggest benefits of using AI in the classroom?

For Teachers: Saves time on lesson planning, creating materials, and drafting emails. It acts as a brainstorming partner for new ideas.

For Students: Provides personalized, immediate feedback on practice work, helps overcome the fear of asking “silly” questions, and supports students who need extra help outside of class hours.

6. Can you give me a simple example of how a teacher might use a chatbot?

Absolutely. A teacher could ask a chatbot, “Generate five discussion questions for 10th graders about the theme of justice in To Kill a Mockingbird,” or “Create three different versions of a math word problem for multiplying fractions with varying difficulty.”

7. Doesn’t this just encourage cheating?

It can, if not addressed directly. That’s why the teacher’s role is crucial. The focus shifts from just getting an answer to